Despite significant investment in account reconciliation software, many enterprise finance teams continue to close their books days behind schedule. The bottleneck is rarely the reconciliation engine. It is the quality, consistency, and structure of the data flowing into it.

The same constraint is now surfacing in AI adoption. Finance leaders are under growing pressure to deploy AI for forecasting, anomaly detection, and real-time decision-making. Yet most initiatives stall not because of the model but because of the data layer beneath it.

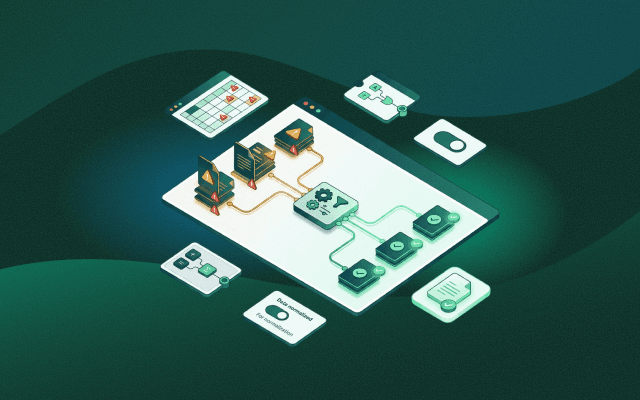

Platforms like Optimus Fintech are designed around this reality. Whether the priority is faster reconciliation or scalable AI, both depend on the same foundation: clean, structured, and continuously available financial data.

What Is Data Fusion in Payment Operations

Data fusion, also called data preparation, is the process of cleaning, standardizing, and validating transaction data before reconciliation matching begins. It ensures that data from banks, payment processors, gateways, and ERPs can be compared accurately.

This includes:

- Standardizing date and time formats

- Normalizing transaction references and IDs

- Aligning currency formats and settlement structures

- Validating fields and flagging missing or malformed values

Without this step, reconciliation systems generate false exceptions instead of meaningful insights.

More importantly, poorly prepared data cannot support AI systems, which rely on consistency and structure to produce reliable outputs.
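
The preparation steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular platform: the field names (`date`, `reference`, `amount`, `currency`), the accepted date layouts, and the comma-as-thousands-separator convention are all assumptions made for the example.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Assumed date layouts, tried in order. Day-first is an assumption here;
# a month-first feed would need its own per-source list.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")
REQUIRED = ("date", "reference", "amount", "currency")


def parse_date(raw):
    """Return an ISO 8601 date string, or None if no layout fits."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


def normalize_reference(raw):
    """Uppercase and keep only letters and digits so IDs compare equal."""
    return "".join(ch for ch in raw.upper() if ch.isalnum())


def parse_amount(raw):
    """Drop commas (assumed thousands separators) and parse as Decimal."""
    try:
        return Decimal(raw.replace(",", "").strip())
    except InvalidOperation:
        return None


def prepare(record):
    """Return (clean_record, problems) for one raw transaction dict."""
    problems = [f"missing {f}" for f in REQUIRED if not record.get(f)]
    if problems:
        return None, problems
    clean = {
        "date": parse_date(record["date"]),
        "reference": normalize_reference(record["reference"]),
        "amount": parse_amount(record["amount"]),
        "currency": record["currency"].strip().upper(),
    }
    problems = [f"malformed {f}" for f in ("date", "amount") if clean[f] is None]
    return (None, problems) if problems else (clean, [])


clean, problems = prepare(
    {"date": "05/03/2024", "reference": "inv-00123 ", "amount": "1,250.00", "currency": "usd"}
)
```

Records that fail are returned with a list of reasons rather than passed on to matching, so they surface as data-quality issues instead of reconciliation exceptions.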

Why Does Financial Reconciliation Take So Long

Reconciliation delays are usually caused by upstream data issues rather than matching inefficiencies.

Common reasons include:

- Inconsistent data formats across systems

- Manual pre-processing in spreadsheets

- Dependency on IT for data ingestion and mapping

- Frequent changes in payment formats and schemas

Finance teams often spend most of their close cycle preparing data before reconciliation even begins. The same inefficiency delays AI initiatives, since models cannot operate on unstable or fragmented datasets.

The Real Bottleneck in the Reconciliation Process

Financial reconciliation does not start with matching. It starts with data readiness. Enterprise payment data flows in from multiple systems, each with its own structure and logic. A single transaction may appear differently across a bank file, a gateway report, and an ERP entry.

ISO 20022 has increased both the richness and variability of payment data. While it enables better structuring, it also introduces inconsistencies in how fields are populated across institutions. Without normalization, these differences appear as exceptions during reconciliation and as noise for AI systems.

The 3 Stages of Modern Reconciliation

To understand where delays occur, it helps to break reconciliation into three stages:

- Data ingestion: Collecting data from banks, processors, gateways, and internal systems

- Data normalization: Standardizing formats, mapping fields, and validating inputs

- Matching and exception handling: Comparing transactions and resolving discrepancies

Most organizations focus on matching. The real leverage lies in normalization. This is also the stage that determines whether the data is usable for AI.
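
As a rough sketch of how the three stages fit together, the example below reads two small CSV extracts, maps them to a common schema, and then matches on reference and amount. The source names (`bank`, `erp`), column names, and sample rows are hypothetical, and matching on a set of keys is a simplification that ignores duplicate transactions.

```python
import csv
import io
from decimal import Decimal

# Hypothetical column mappings: source column -> common schema field.
FIELD_MAPS = {
    "bank": {"TxnRef": "reference", "Amt": "amount"},
    "erp": {"invoice_id": "reference", "total": "amount"},
}


def ingest(csv_text):
    """Stage 1: read raw rows from one source (here, CSV text)."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def normalize(rows, source):
    """Stage 2: rename columns to the common schema and standardize values."""
    mapping = FIELD_MAPS[source]
    out = []
    for row in rows:
        rec = {common: row[col] for col, common in mapping.items()}
        rec["reference"] = rec["reference"].strip().upper()
        rec["amount"] = Decimal(rec["amount"])
        out.append(rec)
    return out


def match(left, right):
    """Stage 3: pair records on (reference, amount); the rest are exceptions."""
    left_keys = {(r["reference"], r["amount"]) for r in left}
    right_keys = {(r["reference"], r["amount"]) for r in right}
    matched = sorted(left_keys & right_keys)
    exceptions = sorted(left_keys ^ right_keys)
    return matched, exceptions


bank_csv = "TxnRef,Amt\ninv-001,100.00\nINV-002,250.50\n"
erp_csv = "invoice_id,total\nINV-001,100.00\nINV-002,250.05\n"

bank = normalize(ingest(bank_csv), "bank")
erp = normalize(ingest(erp_csv), "erp")
matched, exceptions = match(bank, erp)
```

In this sample, `inv-001` and `INV-001` only match because normalization uppercases the reference first. The remaining exception (250.50 against 250.05) is a genuine discrepancy, which is the kind of item the matching stage should be spending time on.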

How Data Readiness Impacts AI Adoption in Finance

Many finance teams approach AI from the top down by investing in tools and models. The real constraint, however, sits at the data layer.

AI in finance depends on:

- Consistent historical data

- Standardized data structures

- Real-time or near real-time availability

When these conditions are not met:

- Anomaly detection systems generate false alerts.

- Forecasting models produce unreliable outputs.

- Automation workflows break under inconsistent inputs.

Data fusion creates the foundation that makes AI viable. Without it, AI initiatives struggle to move beyond experimentation.
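
To make the false-alert problem concrete, here is a small hedged example. It assumes two hypothetical feeds, one reporting amounts in dollars and one in cents, and uses a deliberately simple rule (flag anything more than five times the median) in place of a real anomaly detection model.

```python
from statistics import median


def flag_anomalies(amounts, factor=5):
    """Flag values more than `factor` times the median (a toy rule)."""
    mid = median(amounts)
    return [a for a in amounts if a > factor * mid]


# Hypothetical feeds: the processor reports minor units (cents), not dollars.
gateway_dollars = [120.00, 95.50, 110.25, 130.00]
processor_cents = [11800, 10150]  # really 118.00 and 101.50

# Without fusion, the two feeds are simply concatenated.
raw = gateway_dollars + processor_cents
# With fusion, the processor amounts are converted to dollars first.
fused = gateway_dollars + [c / 100 for c in processor_cents]

false_alerts = flag_anomalies(raw)
alerts_after_fusion = flag_anomalies(fused)
```

On the unfused data, both processor transactions are flagged even though they are ordinary payments. After the units are aligned, nothing is flagged. The model did not change; only the input did.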

What Finance-Led Data Fusion Means

Finance-led data fusion (or data preparation) shifts control of data ingestion and normalization from IT to finance teams.

With no-code tools, finance users can:

- Configure data pipelines

- Map fields across systems

- Define validation rules

- Adapt quickly to format changes

This does not replace IT governance. Infrastructure and security remain under IT. What changes is execution speed and operational control.

Instead of waiting for development cycles, finance teams can respond in real time. This agility is critical not only for reconciliation but also for maintaining AI-ready data pipelines.
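
One way to picture this is to treat field mappings and validation rules as data rather than code. The sketch below assumes a hypothetical rule set of the kind a no-code tool might produce; the structure of `RULES`, the column names, and the patterns are illustrative only and do not reflect any specific product's configuration format.

```python
import re

# Hypothetical rule set a finance user might maintain.
RULES = {
    # Source column -> common schema field.
    "mapping": {"Txn Ref": "reference", "Value Date": "date", "Ccy": "currency"},
    # Common schema field -> pattern the whole value must match.
    "validation": {
        "reference": r"[A-Z0-9-]{4,}",
        "date": r"\d{4}-\d{2}-\d{2}",
        "currency": r"[A-Z]{3}",
    },
}


def apply_rules(row, rules):
    """Rename fields per the mapping, then check each against its pattern."""
    record = {
        target: row.get(source, "")
        for source, target in rules["mapping"].items()
    }
    errors = [
        field
        for field, pattern in rules["validation"].items()
        if not re.fullmatch(pattern, record.get(field, ""))
    ]
    return record, errors


record, errors = apply_rules(
    {"Txn Ref": "PAY-1001", "Value Date": "2024-03-05", "Ccy": "usd"}, RULES
)
```

If a bank renames a column, the change is a one-line edit to `RULES["mapping"]` while `apply_rules` stays untouched. That separation is what lets the rule set sit with finance and the code and infrastructure stay with IT.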